Thank a gamer for the aid of AI

You can listen to this article on Substack.

Next time you’re enjoying how helpful AI is — drafting emails, analyzing data, summarizing meetings, or helping you think through strategy — you might want to quietly thank… video gamers from 2012 and earlier.

Seriously.

The AI revolution we’re living through didn’t just appear out of nowhere.

It runs on GPUs.

And GPUs were originally built for one thing: rendering video games.

Long before “AI copilots” and “frontier models” were buzzwords, gamers were the ones pushing hardware companies to make faster, more powerful graphics cards. Higher frame rates. Better textures. More immersive worlds. Every new game demanded more compute, more parallel processing, more raw power.

That demand created a massive commercial market for GPUs.

And that market funded the hardware innovation that modern AI depends on.

Researchers in the late 2000s realized something profound:

The same chips designed to render complex 3D scenes were incredibly good at parallel math — the exact type of computation needed to train neural networks. Instead of drawing pixels, GPUs could multiply matrices at scale. Suddenly, training models that once took weeks on CPUs could be done dramatically faster.
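To make “parallel math” a little more concrete, here is a minimal sketch in plain Python. The function name `matmul` and the toy numbers are illustrative assumptions, not anything from a real model. A neural-network layer boils down to a matrix multiply, and every cell of the result is its own dot product that doesn’t depend on any other cell. This version computes the cells one after another. The point is only that they *could* all be computed at once, which is exactly the kind of work a GPU spreads across thousands of cores. It is not how real GPU libraries are written.

```python
# A neural-network layer is, at its core, one matrix multiply:
# outputs = inputs x weights. Each output cell is an independent dot
# product, which is why the work can be split across many GPU cores.

def matmul(a, b):
    """Multiply matrix a (n x k) by matrix b (k x m), using plain lists."""
    n, k, m = len(a), len(b), len(b[0])
    assert all(len(row) == k for row in a), "inner dimensions must match"
    # Each (i, j) cell depends only on row i of a and column j of b.
    # This plain-Python version loops over the cells one at a time;
    # a GPU can hand them to many cores in parallel.
    return [[sum(a[i][t] * b[t][j] for t in range(k)) for j in range(m)]
            for i in range(n)]

inputs = [[1, 2], [3, 4]]    # toy data: 2 examples, 2 features each
weights = [[5, 6], [7, 8]]   # toy weights: 2 features -> 2 outputs
print(matmul(inputs, weights))  # [[19, 22], [43, 50]]
```

A real network repeats this across millions of cells per layer, so the gap between “one at a time” and “thousands at once” is where the speedup comes from.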

Then came a quiet but pivotal shift in the 2007–2012 window.

Researchers like Alex Krizhevsky (working with Geoffrey Hinton at the University of Toronto) began rigging Nvidia GPUs for deep learning workloads when most of the field was still using CPUs. At the time, this was unconventional, even a bit scrappy. GPUs were seen as gamer hardware, not “serious” scientific infrastructure.

But the performance gains were too large to ignore.

Because GPUs could train much larger neural networks on far more data, teams using GPU-accelerated methods started dominating pattern-recognition benchmarks in areas like speech and image recognition. What had been computationally impractical suddenly became feasible.

This all culminated in the watershed 2012 ImageNet competition, where AlexNet (trained on two Nvidia GTX 580 cards, consumer gaming GPUs) didn’t just win. It crushed the field by a shocking margin: roughly 15% top-5 error versus about 26% for the runner-up. That result didn’t just advance computer vision. It triggered a field-wide pivot to deep learning.

And the hidden hero wasn’t just the model architecture.

It was GPU compute — originally scaled and commercialized for gaming.

So when we talk about today’s AI breakthroughs — large language models, copilots, real-time analytics, AI-on-your-data experiences — we’re standing on top of a hardware curve that gaming economically justified years before AI became a trillion-dollar narrative.

You could reasonably argue:

If gamers hadn’t spent the 1990s and 2000s demanding ever-better graphics cards, the incentive to invest billions in GPU R&D might have been far weaker. And without that curve, modern AI progress might have arrived much later.

I was thinking about this while listening to Elon Musk’s biography.

There’s a moment where it mentions he interned at a video game startup in college and genuinely loved it — but chose to focus on what he believed would serve humanity more directly. Fast forward, and he now runs xAI, building frontier models that depend on massive GPU clusters.

Ironically, even if he had stayed in gaming, he likely would have ended up adjacent to AI anyway. The technical lineage overlaps more than people realize.

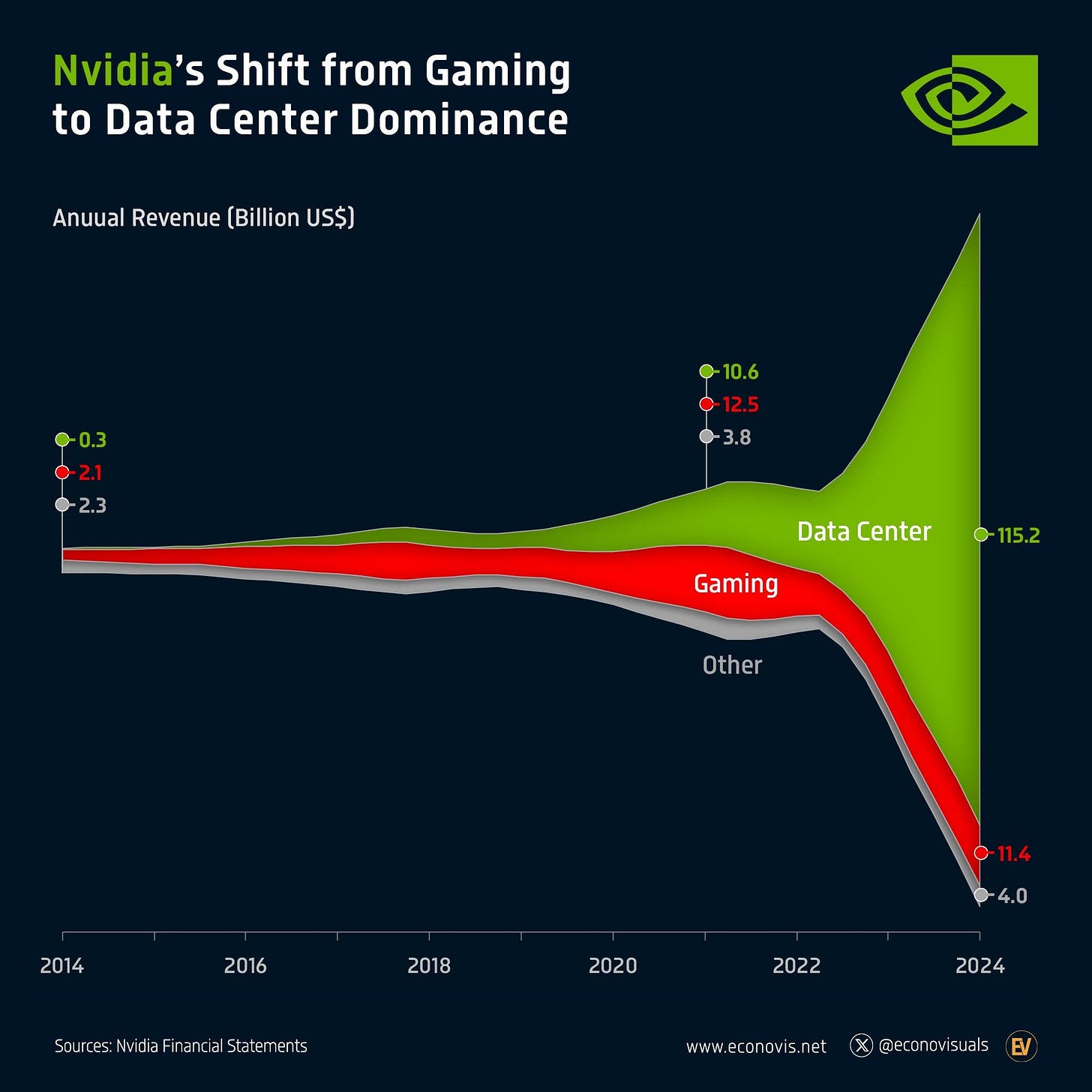

And the corporate arc tells the same story:

Nvidia, a company founded to make graphics chips for gamers, is now one of the most strategically important companies in the world (and, at the time of writing, the largest by market cap), because the exact architecture that made games beautiful also made AI scalable.

So the hidden lineage looks something like this:

Gamers (pre-2012) demanding better graphics → Explosive GPU market → Researchers repurposing GPUs for deep learning → 2012 breakthrough results → Deep learning mainstream → Modern AI copilots and frontier models.

Not a straight line.

But a very real one.

In a strange, roundabout way, the kids optimizing frame rates in 2008 were helping build the infrastructure that now powers billion-dollar AI systems.

So… maybe it’s time to call your son and thank him for his passion for video games.

It wasn’t a waste of time.

He was just trying to help humanity — and you couldn’t see it. 🎮😄

If you want to hear more about this video-game-to-AI arc, I highly recommend Acquired’s series on Nvidia. The first of three episodes is here:

https://www.acquired.fm/episodes/nvidia-the-gpu-company-1993-2006
